Introduction

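Gradient descent is an iterative method that can be used to solve least-squares problems, both linear and nonlinear. When fitting the parameters of a machine learning model, which is an unconstrained optimization problem, gradient descent is one of the most commonly used methods; another common one is the least-squares method. To minimize a loss function, gradient descent iterates step by step until it reaches a minimum of the loss and the corresponding model parameter values. Conversely, if we need to maximize a function, we iterate with gradient ascent instead. In machine learning, two variants have been developed from the basic method: stochastic gradient descent and batch gradient descent.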

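Gradient

The gradient is a vector. At a given point, the directional derivative of a function attains its maximum along the direction of the gradient; in other words, the function changes fastest along that direction, and the maximum rate of change equals the magnitude of the gradient.

- For a function of one variable, the gradient is simply the derivative of the function, that is, the slope of the tangent line at a given point.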

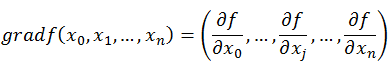

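- For a function of several variables, the gradient is a vector, and its direction is the direction in which the function increases fastest at the given point.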
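To make the two cases above concrete, the gradient can be approximated numerically. The following is a minimal sketch using central differences; the helper `numerical_gradient` and the example function `f(x, y) = x^2 + 3y` are illustrative assumptions, not taken from the original post.

```python
def numerical_gradient(f, point, h=1e-6):
    """Approximate the gradient of f at `point` with central differences."""
    grad = []
    for i in range(len(point)):
        forward = list(point)
        backward = list(point)
        forward[i] += h   # nudge only the i-th coordinate forward
        backward[i] -= h  # and backward
        grad.append((f(forward) - f(backward)) / (2 * h))
    return grad


def f(p):
    # Hypothetical example function: f(x, y) = x^2 + 3y
    x, y = p
    return x ** 2 + 3 * y


# The analytic gradient is (2x, 3), so at (1, 2) it should be close to (2, 3).
g = numerical_gradient(f, [1.0, 2.0])
print(g)
```

Each component of the result is the slope of the function along one coordinate axis, which is why the single-variable case reduces to the ordinary derivative.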

Three variables

Multiple variables

The gradient descent algorithm

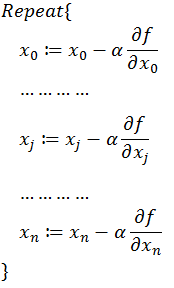

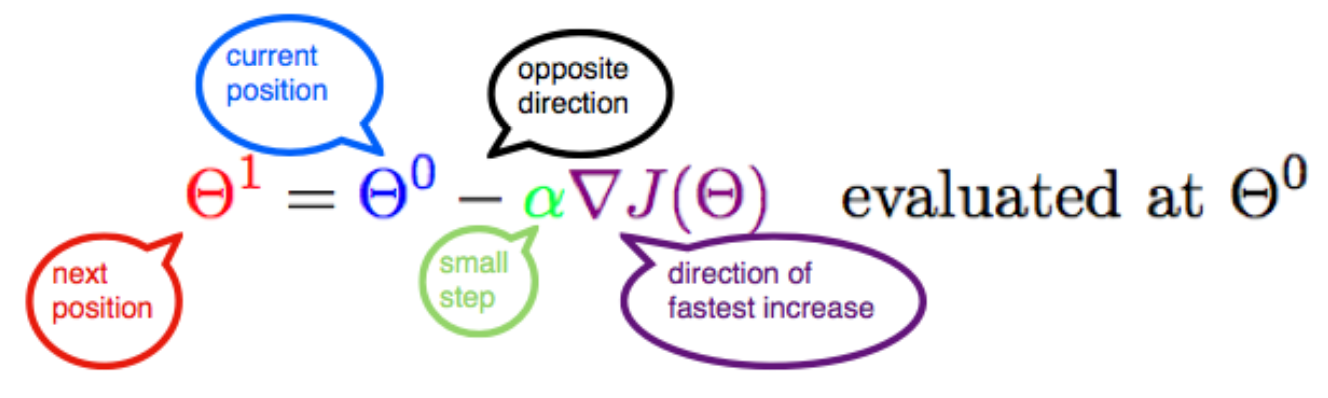

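Procedure

The formula means the following: J is a function of Θ, and we are currently standing at the point Θ0. We want to walk from this point to the minimum of J, that is, the bottom of the valley. First we choose the direction to move in, which is the opposite of the gradient. Then we take a step whose size is controlled by α. After that step we arrive at the point Θ1.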
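The walk described above can be sketched in a few lines of Python. This is a minimal sketch, assuming the formula in the figure is the usual update Θ1 = Θ0 - α·∇J(Θ0); the objective J(Θ) = (Θ - 3)² and the names `gradient_descent` and `grad_J` are illustrative choices, not part of the original post.

```python
def gradient_descent(grad, theta0, alpha, steps):
    """Repeat the update theta <- theta - alpha * grad(theta)."""
    theta = theta0
    for _ in range(steps):
        theta = theta - alpha * grad(theta)
    return theta


def grad_J(theta):
    # Hypothetical objective J(theta) = (theta - 3)^2, whose gradient is 2 * (theta - 3)
    return 2 * (theta - 3)


# One step from theta0 = 0 gives theta1; many steps approach the minimum at theta = 3.
theta1 = gradient_descent(grad_J, theta0=0.0, alpha=0.1, steps=1)
theta_final = gradient_descent(grad_J, theta0=0.0, alpha=0.1, steps=100)
print(theta1, theta_final)
```

With these values the first step moves from 0 to about 0.6, and repeated steps settle near 3, the bottom of this particular valley.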

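Meaning of α

In gradient descent, α is called the learning rate or step size. It controls how far each step goes. If the step is too large, we overshoot the lowest point; if it is too small, convergence takes a very long time.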
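The effect of α is easy to see on a toy problem. The sketch below assumes the objective J(θ) = θ², whose gradient is 2θ; the function name `run` and the three learning rates are illustrative choices.

```python
def run(alpha, steps=50, theta=1.0):
    """Run gradient descent on J(theta) = theta^2 and return the final theta."""
    for _ in range(steps):
        theta = theta - alpha * 2 * theta  # the gradient of theta^2 is 2 * theta
    return theta


too_small = run(0.001)  # barely moves away from the start in 50 steps
reasonable = run(0.1)   # ends very close to the minimum at 0
too_large = run(1.1)    # every step overshoots further, so the iterates diverge
print(too_small, reasonable, too_large)
```

On this objective each update multiplies θ by (1 - 2α), so any α above 1 makes the magnitude of θ grow instead of shrink.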

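Meaning of the minus sign

The minus sign in front of the gradient means that we move in the direction opposite to the gradient. The gradient points in the direction in which the function increases fastest at the current point, while we want the direction of fastest decrease. That direction is the negative gradient, which is why the minus sign is needed.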
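A single step in each direction shows what the sign does. This is a small sketch, again assuming the toy objective J(θ) = θ²; the variable names are illustrative.

```python
def J(theta):
    # Hypothetical objective used only for illustration
    return theta ** 2


def grad_J(theta):
    return 2 * theta


theta = 2.0
alpha = 0.1

descent_step = theta - alpha * grad_J(theta)  # move against the gradient
ascent_step = theta + alpha * grad_J(theta)   # move along the gradient (gradient ascent)

# Moving against the gradient lowers J; moving along it raises J.
print(J(theta), J(descent_step), J(ascent_step))
```

Flipping the sign is all that separates gradient descent from the gradient ascent mentioned in the introduction.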

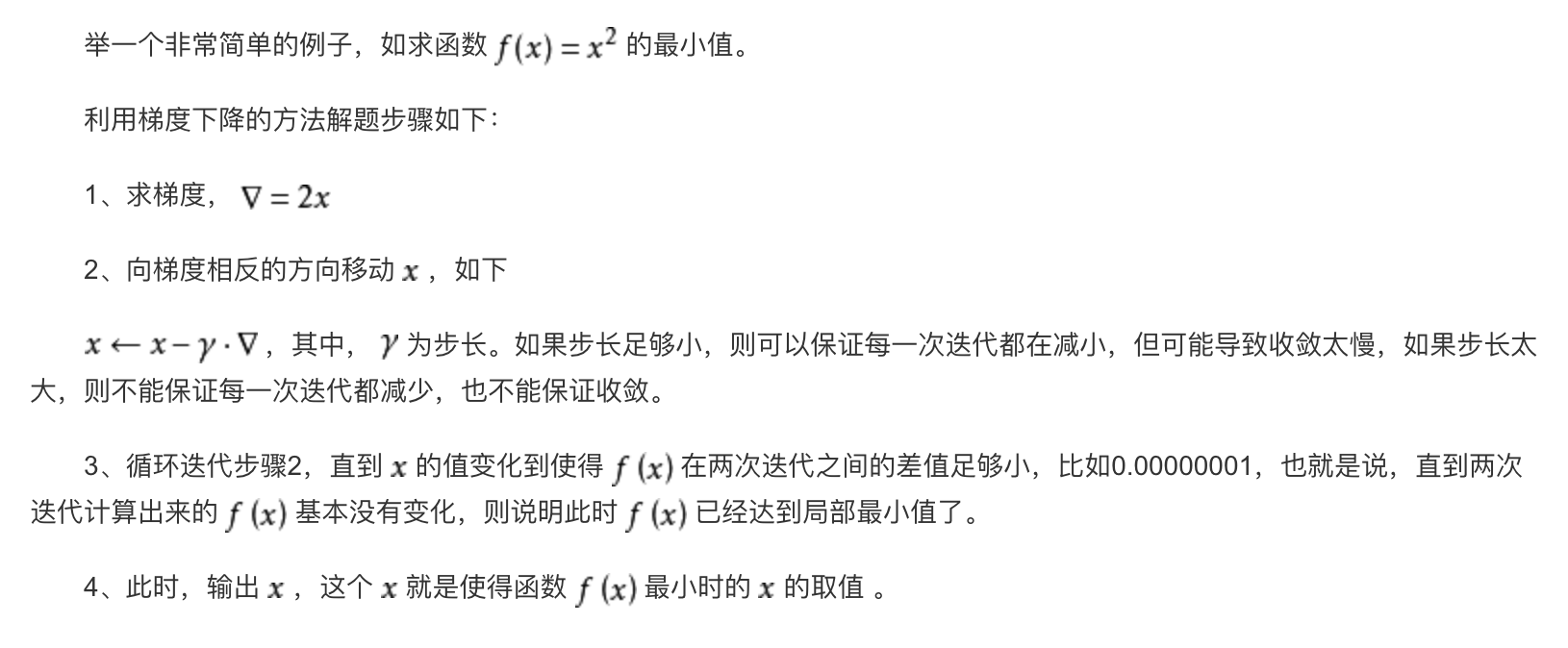

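Example

Drawbacks

- Parameters update slowly (a problem that appears when the bottom of the valley is flat)

- It may converge to a local minimum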
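The local-minimum drawback can be reproduced on a one-dimensional function with two valleys. The sketch below assumes f(x) = x⁴ - 3x² + x, which is an illustrative choice and not from the original post; it has a global minimum near x = -1.30 and a shallower local minimum near x = 1.13.

```python
def f(x):
    # Hypothetical non-convex function with two valleys
    return x ** 4 - 3 * x ** 2 + x


def grad_f(x):
    return 4 * x ** 3 - 6 * x + 1


def descend(x, alpha=0.01, steps=1000):
    """Plain gradient descent starting from x."""
    for _ in range(steps):
        x = x - alpha * grad_f(x)
    return x


x_left = descend(-2.0)   # starts on the left slope, reaches the deeper valley
x_right = descend(2.0)   # starts on the right slope, gets stuck in the shallower valley
print(x_left, f(x_left))
print(x_right, f(x_right))
```

Both runs stop where the gradient vanishes, but only the one started on the left finds the lower of the two minima; the result depends entirely on the starting point.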

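Stochastic gradient descent

In standard (batch) gradient descent, every update requires a pass over all the data. When the dataset is too large, or when the full dataset cannot be obtained at once, this approach is not feasible.

The basic idea for solving this problem is to estimate the "gradient" from a single randomly chosen data point (xn, yn) and use it to update w. This optimization method is called stochastic gradient descent.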

It is an iterative method for optimizing a differentiable objective function, a stochastic approximation of gradient descent optimization. It is called stochastic because samples are selected randomly (or shuffled) instead of being processed as a single group (as in standard gradient descent) or in the order they appear in the training set.

Note that in each iteration (also called an update), the gradient is evaluated only at a single point Xi instead of over the set of all samples.

The key difference compared to standard (batch) gradient descent is that only one piece of data from the dataset is used to calculate each step, and that piece of data is picked randomly at each step.
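The single-sample update can be sketched as follows. This is a minimal illustration, assuming a one-parameter linear model y = w·x with squared-error loss and a small noise-free dataset generated from w = 2; the dataset, the function name `sgd`, and the hyperparameters are all assumptions made for the example.

```python
import random


def sgd(data, w=0.0, alpha=0.01, steps=1000, seed=0):
    """Stochastic gradient descent for the model y = w * x with squared-error loss."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    for _ in range(steps):
        xn, yn = rng.choice(data)        # pick one sample at random
        grad = 2 * (w * xn - yn) * xn    # gradient of (w * xn - yn)^2 with respect to w
        w = w - alpha * grad             # update using that single sample only
    return w


# Hypothetical noise-free data generated from y = 2 * x
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
w = sgd(data)
print(w)
```

Each update touches one sample rather than the whole dataset, so the cost per step does not grow with the dataset size. With noisy data the estimate would fluctuate around the optimum instead of settling exactly, which is why the learning rate is often decreased over time in practice.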


Is there source code for this? I'd like to study it.

You can refer to the TensorFlow source code.